A microarray is a laboratory slide, usually made of glass, whose surface carries thousands of microscopic spots of DNA probes at defined positions. It works on the principle of hybridization between complementary strands of DNA and makes it possible to measure the expression of many genes efficiently in a single experiment.

The data generated by microarray technology are captured with an image scanner and stored on a computer. Because the data sets are so large, they are difficult to analyze with traditional methods, even for statistical experts. The quality and standardization of the data pose additional challenges. Bioinformatics tools were developed to address these problems.

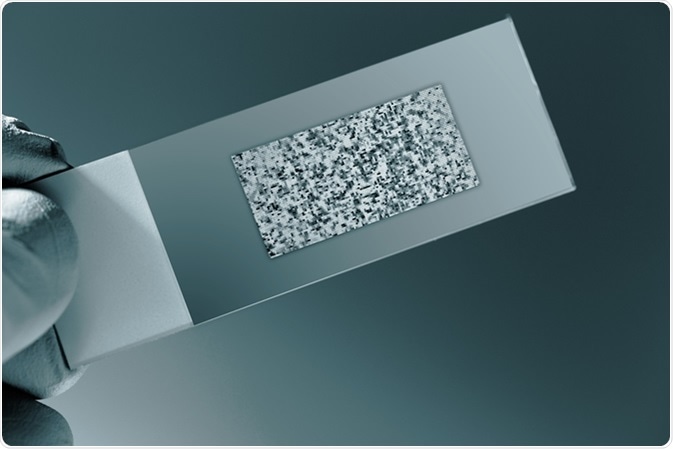

DNA microarray. Image Credit: Science Photo / Shutterstock.com

Bioinformatics

Bioinformatics is an interdisciplinary field of science that combines biology, mathematics, computer science, and statistics. Its purpose is to develop methods for storing, retrieving, and analyzing complex biological data.

In microarray analysis, dedicated bioinformatics tools first visualize and normalize the data and then perform statistical analysis, sample comparisons, and functional interpretation in sequence. In addition, by comparing gene expression data with existing biological information, they support further analyses such as transcription factor binding site analysis, pathway analysis, and protein-protein interaction network analysis.

Bioconductor is one of the important tools used in microarray analysis. It is an open-source, open-development software project based on the R programming language.

Application of bioinformatics in microarray analysis:

The data produced by microarray technology are analyzed in three phases:

- Primary analysis

- Scaling and normalization

- In-depth analysis

a) Primary analysis: In this step, the quality of the data from each array is verified by checking that hybridization, labeling, scanning, and the other experimental steps were carried out properly. Unnecessary and low-quality data are eliminated here.

b) Scaling and normalization: These two methods adjust the data collected from each array so that arrays can be compared easily and efficiently.

Scaling (per-chip normalization) is a method in which the overall fluorescence of each array is adjusted to a common average intensity so that every sample has the same overall brightness.
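The idea can be illustrated with a minimal Python sketch. It assumes each array is a plain list of intensities and that the common target is the average of the per-array means; the function name `scale_arrays` and the example values are hypothetical, and real pipelines often use a trimmed mean or a fixed target instead.

```python
from statistics import mean

def scale_arrays(arrays, target=None):
    """Rescale each array so that its mean intensity equals a common target."""
    array_means = [mean(a) for a in arrays]
    if target is None:
        # Assumption: use the average of the per-array means as the target.
        target = mean(array_means)
    return [[x * target / m for x in a] for a, m in zip(arrays, array_means)]

# Two hypothetical arrays; the second was scanned twice as bright as the first.
raw = [
    [100.0, 200.0, 300.0],  # array 1, mean intensity 200
    [200.0, 400.0, 600.0],  # array 2, mean intensity 400
]
scaled = scale_arrays(raw)
print(scaled)  # [[150.0, 300.0, 450.0], [150.0, 300.0, 450.0]]
```

After scaling, both arrays have the same mean intensity, so differences between them are no longer dominated by overall brightness.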

Normalization (per-gene normalization) is a process that removes sources of variation, other than the biological effect under study, that can affect the measured expression levels of genes. Many normalization methods exist, and it is difficult to single out one as the best.
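As one possible illustration, the sketch below divides each gene's intensities by that gene's median across samples. This is only one convention among many and is an assumption made here, not a method prescribed by the text; the function name `per_gene_normalize` and the data are hypothetical.

```python
from statistics import median

def per_gene_normalize(expr):
    """Divide each gene's values by its median across samples.

    expr maps a gene name to a list of intensities, one per sample.
    """
    normalized = {}
    for gene, values in expr.items():
        m = median(values)
        if m <= 0:
            # A non-positive median cannot be used as a divisor; skip the gene.
            continue
        normalized[gene] = [v / m for v in values]
    return normalized

# Hypothetical genes with very different absolute expression levels.
expr = {
    "geneA": [50.0, 100.0, 200.0],
    "geneB": [1000.0, 2000.0, 4000.0],
}
normalized = per_gene_normalize(expr)
print(normalized)  # {'geneA': [0.5, 1.0, 2.0], 'geneB': [0.5, 1.0, 2.0]}
```

Both genes end up on the same relative scale, which makes their patterns across samples directly comparable even though their raw intensities differ by a factor of 20.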

c) In-depth analysis: This is the third step in analyzing microarray data. Depending on the nature of the experiment, statistical tests and filters are applied to identify genes whose expression differs between samples. A simple analysis is sufficient for a small number of samples, whereas large numbers of samples call for more sophisticated clustering and classification.

The simplest analysis: Filtering is the method used to analyze data from a small number of samples. The two main approaches are “filter on flags” and “filter on fold change”.

A flag is a qualitative measure that accompanies the raw expression score. It indicates whether a gene's signal is statistically distinguishable from the background, so filtering on flags retains only the genes that were measured reliably.
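A flag filter can be sketched in a few lines of Python. The example assumes Affymetrix-style detection calls ("P" for present, "M" for marginal, "A" for absent) and keeps a gene only if it is flagged present in a minimum number of samples; the function name `filter_on_flags`, the threshold, and the data are hypothetical.

```python
def filter_on_flags(flags, min_present=1, keep=("P",)):
    """Return the genes flagged as reliably detected in enough samples.

    flags maps a gene name to a list of per-sample detection calls.
    """
    return [
        gene
        for gene, calls in flags.items()
        if sum(call in keep for call in calls) >= min_present
    ]

# Hypothetical detection calls for three genes across three samples.
flags = {
    "geneA": ["P", "P", "P"],
    "geneB": ["A", "A", "M"],
    "geneC": ["A", "P", "P"],
}
kept = filter_on_flags(flags, min_present=2)
print(kept)  # ['geneA', 'geneC']
```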

“Filter on fold change” is a basic filtering method that compares expression levels between experimental conditions. It is used to identify genes whose expression differs at least two-fold between the conditions.
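The two-fold criterion can be written as a short Python sketch. It assumes positive, already normalized intensities for a control and a treated condition, and works with the log2 ratio of the condition means so that two-fold increases and decreases are treated symmetrically; the function name `filter_on_fold_change` and the data are hypothetical.

```python
from math import log2
from statistics import mean

def filter_on_fold_change(control, treated, min_fold=2.0):
    """Return {gene: log2 fold change} for genes changing at least min_fold.

    control and treated map a gene name to a list of replicate intensities.
    """
    threshold = log2(min_fold)
    selected = {}
    for gene in control:
        log_fc = log2(mean(treated[gene]) / mean(control[gene]))
        if abs(log_fc) >= threshold:
            selected[gene] = log_fc
    return selected

# Hypothetical replicate intensities for three genes.
control = {"geneA": [100.0, 120.0], "geneB": [200.0, 200.0], "geneC": [800.0, 800.0]}
treated = {"geneA": [400.0, 480.0], "geneB": [250.0, 230.0], "geneC": [200.0, 200.0]}
changed = filter_on_fold_change(control, treated)
print(changed)  # {'geneA': 2.0, 'geneC': -2.0}
```

Here geneA is four-fold up and geneC is four-fold down, so both pass the filter, while geneB changes by only 1.2-fold and is dropped.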

Advanced analysis: Clustering and classification are methods that can be used to analyze extremely complex microarray data. However, because these data sets are very large, it is better to filter the data first and restrict them to what the analysis needs.

- Cluster Analysis: This method divides the genes into groups using various clustering techniques, typically unsupervised ones, and is especially useful when the sample contains different types of genes. It is a widely used technique for analyzing gene expression data matrices.

The three common clustering methods are as follows:

- Hierarchical Clustering: An unsupervised technique that builds clusters of genes with similar expression patterns by successively grouping the genes whose expression measurements are most closely related. The result is a dendrogram, a branching tree in which every gene is represented as a leaf.

- K-Means Clustering: A data mining algorithm that partitions the data into a pre-specified number of groups (k) with no prior information on the relationships between the data points.

- Self-Organizing Maps (SOM): A neural network-based, non-hierarchical clustering approach that works in a similar way to K-means clustering.
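Of the three methods, K-means is the easiest to sketch compactly. The Python example below is a minimal version of the standard assign-and-update loop, under the assumptions that each gene is a vector of expression values across samples, that distance is Euclidean, and that the first k genes serve as initial centroids (a naive choice made only to keep the example deterministic). The function name `k_means` and the profiles are hypothetical.

```python
from math import dist
from statistics import mean

def k_means(points, k, iterations=100):
    """Cluster points into k groups; returns (labels, centroids)."""
    # Assumption: naive deterministic initialization from the first k points.
    centroids = [list(p) for p in points[:k]]
    labels = None
    for _ in range(iterations):
        # Assignment step: attach each point to its nearest centroid.
        new_labels = [
            min(range(k), key=lambda c: dist(p, centroids[c])) for p in points
        ]
        if new_labels == labels:
            break  # assignments no longer change, so the loop has converged
        labels = new_labels
        # Update step: move each centroid to the mean of its members.
        for c in range(k):
            members = [p for p, label in zip(points, labels) if label == c]
            if members:
                centroids[c] = [mean(column) for column in zip(*members)]
    return labels, centroids

# Hypothetical expression profiles of four genes across three samples.
profiles = {
    "g1": [1.0, 1.1, 0.9],
    "g2": [5.0, 5.2, 4.9],
    "g3": [0.9, 1.0, 1.2],
    "g4": [5.1, 4.8, 5.0],
}
labels, centroids = k_means(list(profiles.values()), k=2)
print(dict(zip(profiles, labels)))  # {'g1': 0, 'g2': 1, 'g3': 0, 'g4': 1}
```

The low-expression genes g1 and g3 end up in one cluster and the high-expression genes g2 and g4 in the other. In practice the result depends on the initial centroids, so implementations usually run several random restarts.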

- Classification (class prediction/supervised learning/discriminant analysis): In this method, a set of pre-classified examples is provided. From these examples, the classifier learns a rule by which new samples can be assigned to one of the given classes. The number of samples should be sufficient both to train the algorithm and to test it on a new group of samples. Normalized gene expression data are used as input vectors for building the classification rules.
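A nearest-centroid classifier is one of the simplest ways to illustrate this train-then-predict pattern. The Python sketch below is an illustration only and not a method named in the text: it assumes each sample is a vector of normalized expression values, learns one mean profile per class, and assigns a new sample to the class with the closest profile. The function names, the class labels "normal" and "tumor", and the data are hypothetical.

```python
from math import dist
from statistics import mean

def train_centroids(samples, labels):
    """Learn one mean expression profile (centroid) per class."""
    by_class = {}
    for vector, label in zip(samples, labels):
        by_class.setdefault(label, []).append(vector)
    return {
        label: [mean(column) for column in zip(*vectors)]
        for label, vectors in by_class.items()
    }

def predict(centroids, vector):
    """Assign a sample to the class whose centroid is nearest (Euclidean)."""
    return min(centroids, key=lambda label: dist(vector, centroids[label]))

# Hypothetical training set: two genes measured in four pre-classified samples.
train_samples = [[1.0, 5.0], [1.2, 4.8], [4.0, 1.0], [4.2, 1.3]]
train_labels = ["normal", "normal", "tumor", "tumor"]
model = train_centroids(train_samples, train_labels)

# Samples that were not used for training.
print(predict(model, [3.9, 1.1]))  # tumor
print(predict(model, [1.1, 5.1]))  # normal
```

Keeping the test samples separate from the training samples reflects the point made above: the rule is built on one group of samples and checked on another.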


Last Updated: Sep 7, 2022